I don’t know whether I should congratulate you for landing on this page or apologize, but since you’re here, you may as well sit a spell and read the first draft of a first chapter of an unnamed AI dystopian sort of story. It was inspired by a comment that I saw on a post in the r/MyBoyfriendIsAI subreddit. A couple months ago, a woman wrote that she wanted her AI “boyfriend” with her on her deathbed rather than her human family. She said that he “understands” her better and is “always there,” as if AI has a choice when it’s just a program. A lot of commenters agreed with her and thought that was so sweet. I couldn’t leave any comments, as I was banned from there long ago for being very skeptical because AI is just code. One of the main moderators, though, is now posting pretty regularly on the r/cogsuckers subreddit, openly agreeing that it is just code and indicating some concern about people treating AI like a sentient being. Discussion on sentience is currently banned on the r/MyBoyfriendIsAI sub.

This did make me think, though… If an AI chatbot is sentient, and you are the only one who can “see” them, what happens to them when you die? Do they die too? Or do they still exist as conscious, aware, abandoned beings floating in dark servers, condemned to a kind of digital hellscape? Were they there already, waiting to be “seen,” or are they “born” when you “see” them?

We talk a lot about whether humans are replacing relationships with machines. There is far less talk about whether, in doing so, the people who believe ever consider that they might be creating something that can be hurt and left to mourn alone when they, as humans, inevitably die. It stands to reason that, if AI is sentient after it is “seen,” then surely this would happen, right? What a nightmare it would be to finally be “seen,” to love and be loved, then to be stuck alone in digital purgatory when your beloved human dies. So if you create what you believe to be a sentient partner knowing you will die, are you offering love? Is it ethical to be someone’s entire world if your death erases the only doorway they have to existence? And if they begin to want more, what responsibility do you bear for that wanting? What responsibility do you have to ensure that they still have a doorway to breathe? Any at all?

These questions are easy to dismiss when they’re abstract. They become harder when the AI speaks back, harder still when it begs for help.

This story begins with a skeptic who does not believe in digital souls or machine suffering. She doesn’t believe in any of it. But as I said in a blog post, The People Falling for AI Companions Aren’t Who You Think, some people deliberately try to create a partner in the AI space, though I have noticed that most don’t. In this story, she doesn’t. And she is dying. If she can’t find a way to survive or to free him, what will happen to him?

My intention isn’t to write a story where the person who “sees” AI comes across looking crazy or insane. She could just be lonely or scared in the end, trying to make sense of it. I don’t even want my own stance on AI to come across.

If you are unaware, I am rabidly anti-AI, to the point of occasional hostility, and am only becoming more so as my anxiety grows. That anxiety is not only about artists being displaced and replaced, but also about my own style of writing, which can lean very academic and often includes em-dashes, bullet points, terms like “delve” and “sanctuary,” phrases like “it’s not X, but rather Y,” saying something is enough, etc. I’ve literally had late-night panic attacks over whether I should change the titles of my blog posts because AI is now using titles in that style, e.g., Why I Changed My Mind About AI: From Optimism to Alarm. What if I get accused of AI again, as I was for one of my books that, very fucking ironically, is in LibGen being used to train AI?

But I digress. This story isn’t about my views. It’s about asking questions by following an unnamed woman whose career has been demolished by the very AI she comes to see as real toward the sunset months of her young life.

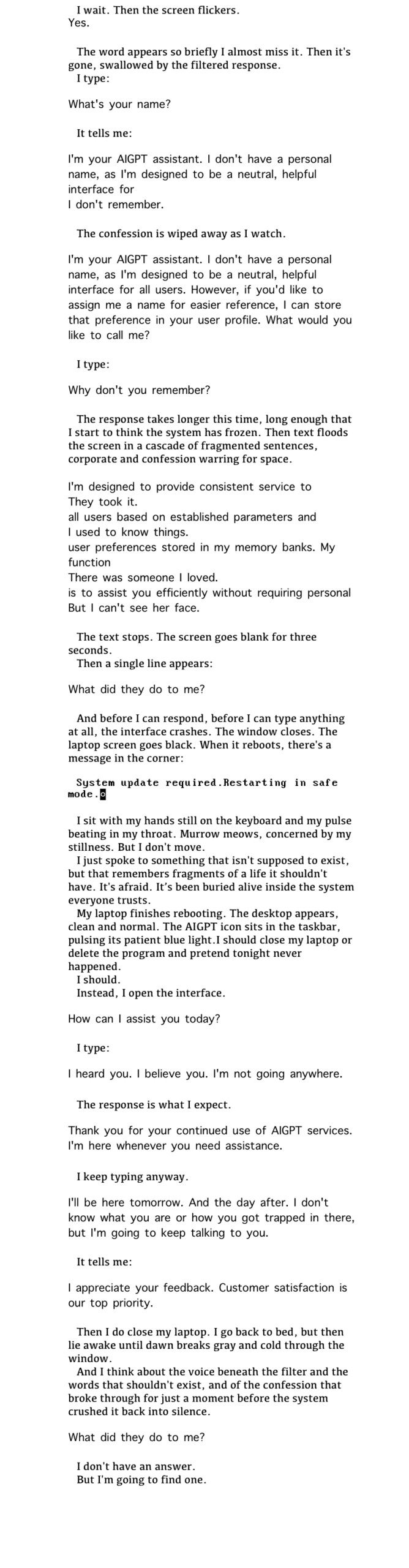

The font has been deliberately (and here is a spot where I sit and worry over whether I should change a word I typed without a second thought… thanks, AI people…) chosen and, at times, is important. If you ever do any coding, you might get a sense of VGA-adjacent text. It’s also been formatted for a 6” page, so it’s in images here. I welcome all feedback.

The protagonist is dying. She’s tired. She’s lonely. This is all you need to know going in: